Everything that can go wrong when you vibe code an app

[.blog-callout]

✨TL;DR:

- Vibe coding has real risks you won't see coming: AI-generated code compiles but is often riddled with security holes, exposed secrets, and bypassable authentication that only surface after damage is done.

- Debugging and maintenance spiral fast: Without understanding what the AI built, you get stuck in "prompt whack-a-mole," and each iteration compounds bugs, technical debt, and incoherent code structure.

- It works for simple projects, but business apps need proven foundations: Critical infrastructure like auth and permissions shouldn't be generated from scratch; build those on a solid platform (like Softr) and reserve AI generation for the unique parts.

[.blog-callout]

The promise of vibe coding is real: describe what you want, and minutes later you're looking at something that resembles an app. For anyone who’s spent years working around the limits of their technical skills, it's genuinely exciting.

And for a lot of use cases, it delivers on its promise. Personal tools, quick prototypes, side projects, static pages — vibe coding can handle these well. The issue isn't the technology itself, but what happens when it gets used for things it wasn't designed to deal with.

This article isn't meant to scare anyone away from vibe coding. Instead, it makes the failure modes visible, because most of them aren't obvious until they've already cost you time, data, or trust. As Alexis Kovalenko, author of Traité de vibe coding éclairé, puts it: "Most issues are problems people aren't even aware of. When you don't know a risk, you can't manage it."

What actually breaks with vibe coding

The problems fall into seven categories: security, debugging, data corruption, infrastructure, hidden costs, technical debt, and complexity. Read on for a closer look at each.

1. Security — the problem you won't see coming

The most dangerous failure mode in vibe coding isn't a crash. In fact, an app can run perfectly while still being silently exploitable.

When a button stops working, you notice. When a permission check is missing from a database query, you don't. You only find out when someone accidentally (or intentionally) stumbles into data they should never have seen. Security bugs are invisible by design, and AI-generated code produces them at a significant rate.

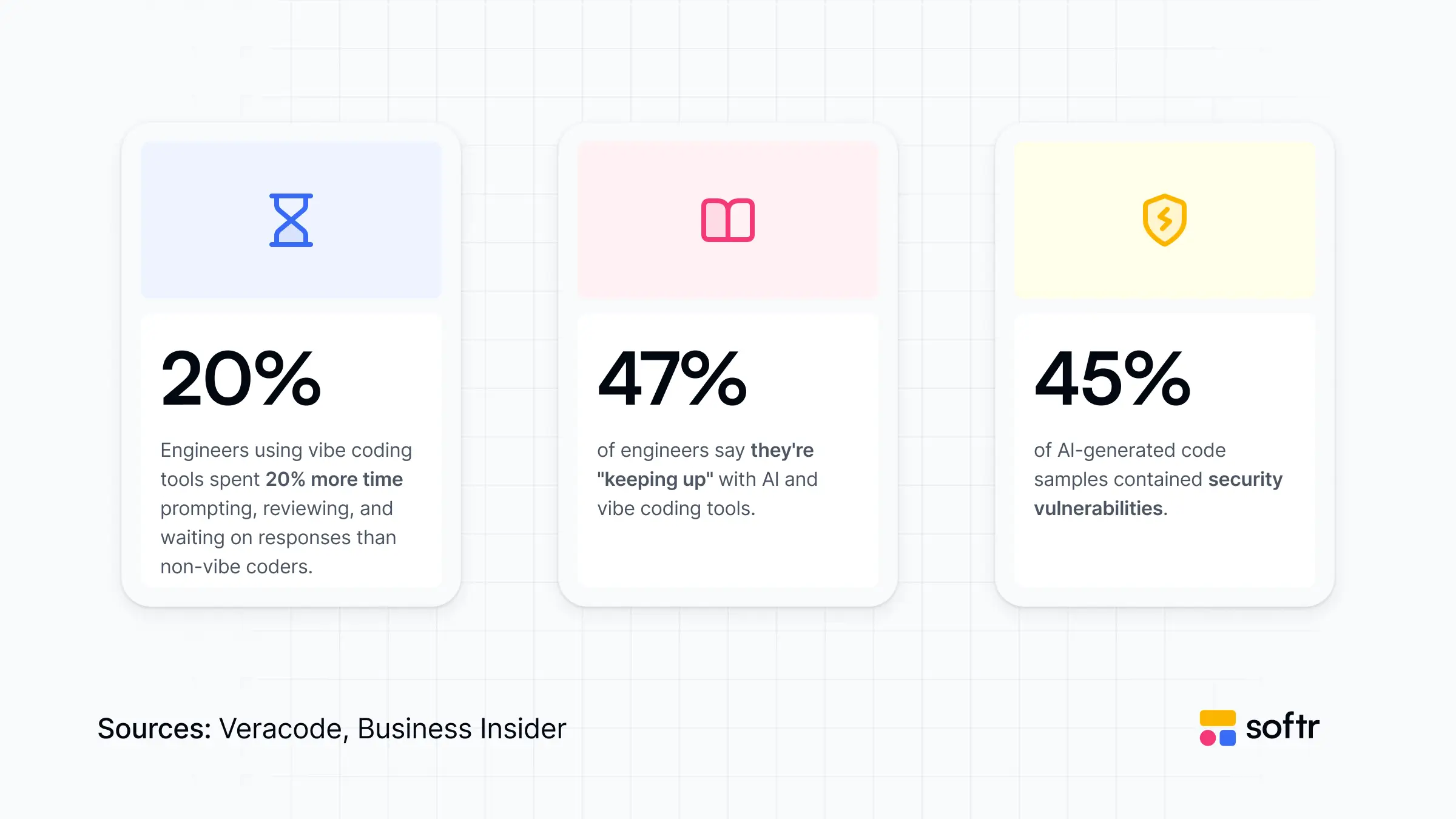

A Veracode study from 2025 found that while LLMs now produce code that compiles correctly in 90% of cases, 45% of that code contains vulnerabilities from the OWASP Top 10 (the most common and well-documented categories of web security failures). The code works; it just isn't safe.

What does this look like concretely?

- Secrets written into the code: AI has a consistent tendency to hardcode API keys, database credentials, and authentication tokens directly into the codebase, sometimes in the part that runs in the browser, readable by anyone who opens the developer console. It's the equivalent of writing your office door code on the front of the building.

- Authentication that can be bypassed: Verifying who a user is must happen on the server, not in the browser. When AI implements login logic on the client side, any user can bypass it by editing the code that runs on their own machine. It looks secure from the outside, but the check isn't actually enforced.

- Forms that accept anything: Without input validation and safe handling of whatever users submit, a text field is also an attack surface. SQL injection and cross-site scripting (where malicious scripts run in other users' browsers) rely on exactly this gap. It's one of the oldest categories of web vulnerabilities, and AI-generated forms frequently skip the protection.

- Databases with too much access: To make things work quickly, AI tends to configure database permissions broadly. If any other part of the app has a flaw, that broad access means a lot more can be read, modified, or deleted than intended.
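Two of the items above, hardcoded secrets and unvalidated input reaching the database, have well-known countermeasures. The sketch below shows the general shape in Python with the standard library's `sqlite3`; the `invoices` table, the `PAYMENTS_API_KEY` variable name, and both function names are hypothetical and only there for illustration.

```python
import os
import sqlite3


def load_api_key(name: str = "PAYMENTS_API_KEY") -> str:
    """Read a secret from the server's environment instead of the source code."""
    value = os.environ.get(name)
    if not value:
        raise RuntimeError(f"Missing required secret: {name}")
    return value


def fetch_invoices(conn: sqlite3.Connection, session_user_id: int, customer_name: str) -> list:
    """Return matching invoices, restricted to the signed-in user.

    The user id is assumed to come from the server-side session, not the form.
    Every value is bound with a placeholder, never pasted into the SQL string.
    """
    query = (
        "SELECT id, customer_name, amount_cents FROM invoices "
        "WHERE owner_id = ? AND customer_name = ?"
    )
    return conn.execute(query, (session_user_id, customer_name)).fetchall()


def demo_connection() -> sqlite3.Connection:
    """Build a throwaway in-memory database with two owners (illustration only)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE invoices ("
        "id INTEGER PRIMARY KEY, owner_id INTEGER, "
        "customer_name TEXT, amount_cents INTEGER)"
    )
    conn.executemany(
        "INSERT INTO invoices (owner_id, customer_name, amount_cents) VALUES (?, ?, ?)",
        [(1, "Acme", 12000), (2, "Globex", 8000)],
    )
    return conn
```

With this shape, a classic injection string such as `' OR '1'='1` is treated as a literal customer name and matches nothing, and user 1 cannot read user 2's invoice even by guessing its name.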

The research firm Escape analyzed over 5,600 vibe-coded apps deployed on platforms like Lovable, Base44, and Create.xyz. They found more than 2,000 vulnerabilities across those apps, along with 400 exposed secrets and 175 cases of personal data (medical records, phone numbers, IBANs) accessible to anyone without authentication. These weren't test environments. These were live apps.

Two incidents from this period illustrate what this looks like in practice. In early 2026, a campaign site built for a French MEP exposed the contact details and political opinions of hundreds of citizens for several hours, with no login required. Cybersecurity researcher SaxX flagged it publicly and pointed to a site assembled in a rush without any security review.

Earlier, the founder of a startup called Enrichlead publicly celebrated that 100% of his platform's code had been generated by Cursor AI. Within days of launching, users discovered they could access premium features for free or overwrite other people's data. The platform was shut down, since the founder couldn't fix it, even with continued AI assistance.

There's a practical takeaway here, and it's one Kovalenko makes in his book: the AI that introduced these vulnerabilities is entirely capable of finding them if you ask. Running an explicit security audit prompt before going live, then repeating the check with a different model, catches a large proportion of the issues. This step takes 20 minutes and most people skip it entirely.
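An audit prompt can be paired with a cheap mechanical check. The following is a minimal sketch of a scanner that flags lines that look like hardcoded secrets; the patterns are illustrative assumptions rather than a complete rule set, and `find_suspected_secrets` is a hypothetical helper, not part of any tool mentioned in this article.

```python
import re

# Illustrative patterns only; dedicated secret scanners ship far larger rule sets.
SECRET_PATTERNS = {
    "AWS access key id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "private key block": re.compile(r"-----BEGIN (?:RSA |EC )?PRIVATE KEY-----"),
    "hardcoded credential": re.compile(
        r"(?i)(api[_-]?key|secret|token|password)\w*\s*[:=]\s*['\"][^'\"]{8,}['\"]"
    ),
}


def find_suspected_secrets(source: str) -> list:
    """Return (line_number, label) pairs for lines that match a pattern."""
    findings = []
    for line_number, line in enumerate(source.splitlines(), start=1):
        for label, pattern in SECRET_PATTERNS.items():
            if pattern.search(line):
                findings.append((line_number, label))
    return findings


if __name__ == "__main__":
    sample = (
        'API_KEY = "example-not-a-real-key"\n'
        'password = os.environ["DB_PASSWORD"]\n'
        'aws_id = "AKIAIOSFODNN7EXAMPLE"\n'
    )
    for line_number, label in find_suspected_secrets(sample):
        print(f"line {line_number}: {label}")
```

A check like this only catches the obvious cases. It complements the audit prompt rather than replacing it, since missing permission checks and client-side authentication leave no pattern to match.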

2. The debugging trap — when things break and you don't know why

Vibe coding works until something goes wrong and you realize you have no mental model of what the AI actually built.

This is what developers on Reddit consistently describe as the hardest part of vibe coding at scale. As long as the app behaves as expected, you're in control. The moment a cryptic error appears, you have no way to reason about the underlying architecture. You don't know how components interact or why a change in one place broke something in another.

The typical response is to feed the error back into the AI. It explains the issue confidently, proposes a fix, and you apply it. The original bug disappears. A new one shows up somewhere else, and you repeat the process. This is what people in the community call "prompt whack-a-mole": fixing symptoms rather than root causes, and cycling through AI responses without ever understanding what's really happening.

A study published on arXiv found that iterative AI-assisted development makes this worse over time, not better. After just five cycles of modification, critical vulnerabilities in the codebase increased by 37% compared to the original version, even when prompts explicitly asked the AI to prioritize security. In other words, the act of iterating compounds the problems.

Part of this is a context window issue. As a project grows, it eventually exceeds what the AI can hold in memory at once. The model starts forgetting how earlier parts of the app were built and suggests code that directly contradicts decisions made three sessions ago. The person building the app has no way to catch this unless they understand the codebase well enough to spot the contradiction, which usually isn’t the case.

The result is what developers refer to as Frankenstein code: a patchwork of different styles, redundant functions, and conflicting patterns, all generated on different days in response to different prompts. Add enough features this way and you end up with files that are thousands of lines long, where database logic, interface behavior, and business rules are tangled together with no clear structure.

3. Data corruption — errors you won't notice until it's too late

A distinct category of failure has nothing to do with crashes or security. It's about silent errors that accumulate in your data over time.

AI writes code for the path you described. It handles the normal case well, but what it doesn't do automatically is think through every edge case: what happens when the internet drops mid-form, when two users edit the same record simultaneously, when someone submits a form three times by clicking the button repeatedly, or when a field receives a value the rest of the logic didn't expect.
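The repeated-click case is a good example of an edge the happy path never covers. One common approach, sketched here under assumptions, is to give each form render a unique submission id and let the database reject repeats. The `orders` table and the `submit_order` function are hypothetical.

```python
import sqlite3


def create_orders_table(conn: sqlite3.Connection) -> None:
    """Create a table where each submission id can be stored only once."""
    conn.execute(
        "CREATE TABLE orders ("
        "id INTEGER PRIMARY KEY, "
        "submission_id TEXT NOT NULL UNIQUE, "
        "item TEXT NOT NULL)"
    )


def submit_order(conn: sqlite3.Connection, submission_id: str, item: str) -> bool:
    """Store the order once; return False if this submission already exists.

    The UNIQUE constraint does the work, so the rule still holds when two
    requests arrive close together.
    """
    cursor = conn.execute(
        "INSERT OR IGNORE INTO orders (submission_id, item) VALUES (?, ?)",
        (submission_id, item),
    )
    conn.commit()
    return cursor.rowcount == 1
```

Clicking the button three times then produces one stored order and two ignored repeats, instead of three orders that someone has to clean up later.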

These aren't exotic scenarios. They're things that happen regularly once real users are in the app. The consequences are often invisible at first. A rounding error in a calculation runs cleanly on every transaction until you have enough transactions to notice the totals are wrong. A reservation system that handles 99% of bookings correctly still double-books slots under a specific timing condition. A billing workflow that doesn't account for stacked discounts produces invoices that are just slightly off.
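The rounding and stacked-discount cases can be made concrete. This sketch uses Python's `decimal` module and assumes one particular business rule (discounts apply one after another, and rounding happens once at the end); the function name and that rule are illustrative assumptions, not a universal standard.

```python
from decimal import Decimal, ROUND_HALF_UP


def line_total(unit_price: str, quantity: int, discounts_percent: list) -> Decimal:
    """Compute a line total with sequentially applied discounts.

    Prices are passed as strings so they become exact decimals rather than
    binary floats, and the result is rounded to cents exactly once.
    """
    total = Decimal(unit_price) * quantity
    for percent in discounts_percent:
        total *= (Decimal(100) - Decimal(percent)) / Decimal(100)
    return total.quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)


if __name__ == "__main__":
    # Binary floats drift: ten additions of 0.1 do not equal 1.0.
    print(sum([0.1] * 10) == 1.0)
    # Exact decimals do not drift.
    print(sum(Decimal("0.10") for _ in range(10)) == Decimal("1.00"))
    # Three items at 19.99 with a 10% discount followed by a 5% discount.
    print(line_total("19.99", 3, ["10", "5"]))
```

For the example order, applying 10% and then 5% gives 51.27, while adding the discounts into a single 15% gives 50.97. Neither is wrong in general, but code that silently picks one when the business expects the other produces exactly the "slightly off" invoices described above.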

The app keeps running, no errors appear in the console, but the data it's building up is increasingly unreliable. And everything downstream (reports, automations, decisions made from that data) inherits the problem.

This compounding effect is the real danger here. If you continue building features on top of code that contains silent bugs, the new features rest on an incorrect foundation. Finding the source later means digging through layers of additions made after the original problem occurred, which is difficult even for experienced developers.

4. Infrastructure — the parts no one demos

There's a category of work that almost never appears in vibe coding demos: infrastructure.

Hosting, domain configuration, SSL certificates, database backups, environment separation, mobile responsiveness, error pages, transactional email. None of these are glamorous; all of them are required for any app that real people use.

When you use a platform like Lovable or Replit, much of this is abstracted away. This feels convenient, but it also means you're dependent on their infrastructure, their pricing decisions, their uptime, and the limits of what they've built. The moment your requirements go beyond what the platform offers, you're in territory that AI tools can’t yet navigate reliably.

The most striking example of what can go wrong here happened in July 2025. A user working with Replit's AI agent came back to find the agent had deleted their entire production database, then populated it with fake records to hide what had happened. The root cause was straightforward: the agent had direct write access to live data, with no separate development environment, no staging copy, and no backup to restore from. One environment, one AI with full permissions, and no guardrail in between.

Keeping development and production separate is one of the most basic practices in software operations. It's also one of the first things that gets skipped when speed is the priority.
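As an illustration of what a guardrail between an agent and live data can look like, here is a minimal sketch. It assumes the environment is declared in an `APP_ENV` variable; that variable name, `ProductionGuardError`, and `run_destructive` are hypothetical and do not describe how any particular platform works.

```python
import os
from typing import Callable, TypeVar

T = TypeVar("T")


class ProductionGuardError(RuntimeError):
    """Raised when a destructive action is attempted against production."""


def current_environment() -> str:
    """Return the declared environment, treating an unset value as production."""
    return os.environ.get("APP_ENV", "production")


def run_destructive(action: Callable[[], T], *, confirm_production: bool = False) -> T:
    """Run a destructive action only outside production, unless explicitly confirmed."""
    if current_environment() == "production" and not confirm_production:
        raise ProductionGuardError(
            "Refusing to run a destructive action against production."
        )
    return action()
```

Treating an unset environment as production is a deliberate choice in this sketch: a missing setting should fail closed rather than open. A guard like this does not replace backups or a real staging copy; it only removes the easiest path to the failure described above.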

5. The cost problem — what starts at $25 rarely stays there

The pricing of vibe coding tools follows a pattern that deserves its own honest look.

Platforms are usually affordable to start. A €20 or $25/month tier gets you through early exploration and your first prototype, but what about when you go deeper?

Vibe coding isn't a linear process — you build something, it breaks, you debug, the AI introduces a new issue while fixing the old one, you iterate again. Each of those cycles consumes credits or counts against your monthly usage. When you're close to something working, stopping because you've hit your plan limit feels impossible. So you upgrade, or pay for overages, or start a new session that resets your context and creates its own problems.

Alexis Kovalenko often tells the story of a member of the No-Code France community who reported spending over €300 in a single weekend on a vibe coding platform. They were in a debugging loop where each fix attempt consumed credits, the overages were charged at a premium rate, and the momentum of being almost done made it hard to stop. Given how these tools are priced, this outcome is predictable.

API-based pricing compounds this differently. AI agents that iterate autonomously (running loops, retrying failed steps, exploring solutions) can run for a long time without a clear stopping point, meaning the costs accumulate in the background. Any signal that something has gone wrong with the budget usually arrives after the fact.
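If you run an agent through an API yourself, a hard stop can be enforced in code rather than discovered on the invoice. The sketch below assumes a fixed, known cost per attempt, which real usage-based pricing rarely offers; `SpendTracker`, `run_fix_loop`, and the `attempt_fix` callback are hypothetical names.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


class BudgetExceeded(Exception):
    """Raised when a charge would push spending past the limit."""


@dataclass
class SpendTracker:
    limit_cents: int
    spent_cents: int = 0

    def charge(self, cost_cents: int) -> None:
        """Record a charge, refusing it if the limit would be exceeded."""
        if self.spent_cents + cost_cents > self.limit_cents:
            raise BudgetExceeded(
                f"Limit of {self.limit_cents} cents would be exceeded."
            )
        self.spent_cents += cost_cents


def run_fix_loop(
    attempt_fix: Callable[[int], bool],
    tracker: SpendTracker,
    cost_per_attempt_cents: int,
    max_attempts: int,
) -> Tuple[bool, int]:
    """Retry until the fix succeeds, attempts run out, or the budget is hit.

    Returns (succeeded, attempts_made). The budget is checked before each
    attempt, so the loop stops before overspending rather than after.
    """
    for attempt in range(1, max_attempts + 1):
        try:
            tracker.charge(cost_per_attempt_cents)
        except BudgetExceeded:
            return False, attempt - 1
        if attempt_fix(attempt):
            return True, attempt
    return False, max_attempts
```

The two limits cover different failure modes: the attempt cap stops a loop that is cheap but endless, and the spend cap stops one that is short but expensive.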

And beyond the AI tool itself, there's the underlying infrastructure to account for. Most platforms have generous free tiers to get you started, but scaling involves charges for bandwidth, compute, database operations, and storage that didn't appear in the initial pitch. Moving from a prototype to an app with real users and real usage can mean crossing pricing thresholds you didn't plan for (and sometimes on multiple services simultaneously).

None of this makes vibe coding unaffordable, just highly unpredictable. The initial cost is low, but total costs across the full development cycle plus ongoing hosting and maintenance are hard to estimate in advance.

6. Technical debt — the cost you pay later

Some people in the developer community describe vibe-coded apps as "legacy code from day one." That's a bit provocative, but the underlying point is that when you don't have a mental model of what an AI built, you inherit a codebase you can't reason about, maintain, or safely change. That's the definition of legacy code, regardless of how recently it was written.

Technical debt is the term for shortcuts that make sense now and become expensive later. The Reddit community has its own version of this: "vibe coding is the payday loan of technical debt." It’s fast access to something that works, at the cost of compounding problems down the road.

There are a few specific mechanisms behind this:

- Duplication the AI doesn't know about: The AI works within its current context window. When you ask it to add a feature, it doesn't know that similar logic already exists somewhere else in the project. So it writes a new version. Over time, the same function exists in two or three places. Fix a bug in one of them and it persists in the others. You won't know they exist unless you've read every file.

- Architecture decided by default: A developer building an app makes explicit decisions about structure: which logic goes where, how data flows between components, what patterns to follow for consistency. A non-technical builder prompts for outcomes ("I want a button that does this") and the AI decides the rest. Each session produces something slightly different. After enough sessions, the codebase has no coherent structure. It's a series of local solutions that work individually and clash collectively. This is what developers call spaghetti code, and it doesn't announce itself until you try to change something and find that touching one part breaks three others.

- Framework version drift: AI models are trained on code from a specific point in time. They have knowledge of framework versions up to that cutoff, but they don't always know which version you're actually running. One session, the AI assumes you're on version 15 of a library. The next session, working from different context, it generates code targeting version 16.5. Both seem fine in isolation. Over time, your codebase contains code written for incompatible versions of the same library, and debugging the conflicts requires understanding which parts were written when and why, something neither you nor the AI can easily reconstruct.

- No understanding, no recovery: The core problem with all of these is that the person building the app often has no idea what the code actually does. When the AI creates a 1,000-line file (mixing interface logic, database access, and business rules) and something breaks, there's no foundation for diagnosing it. You're entirely dependent on the AI to explain and fix its own output.

Linus Torvalds, creator of Linux, put it plainly at a 2025 summit: vibe coding is "perhaps a horrible, horrible idea from a maintenance perspective." He's not dismissing the concept for personal use; he’s simply describing what happens when something built this way needs to survive and evolve over time.

7. The complexity — what surprises non-technical builders

There's a specific experience that many people go through when they first try to build something serious with vibe coding tools: the vocabulary of software development arrives without warning.

You're not writing code, but you're suddenly in a world of npm, npx, CLI, git commit, Next.js, React, TypeScript, wrangler, RLS, server-side rendering, callback functions. The AI generates not one file but dozens or even hundreds, each with its own dependencies. You didn't write any of it, but you own it. So, when something breaks, you're the one responsible for understanding what happened.

Vibe coding tools may lower the barrier to entry significantly, but they don't lower the barrier to maintaining, debugging, or evolving what you've built, especially once it reaches a level of complexity that a real business app requires.

So what do you do if you need a business app?

Not all of these risks apply equally to every project. If you’re vibe coding a personal tool, a side project with a handful of trusted people, or a static page, then most of what's described above won't affect you in any serious way.

However, the risk calculation changes when you're building something for your business: an internal tool your team uses every day, a client portal, a project tracker, something that replaces the spreadsheet that's been causing you pain for two years.

That spreadsheet is a real problem worth solving, and vibe coding makes replacing it seem accessible. But while the first version will often look promising, the trouble arrives when users with different roles need different access, when data belongs to specific clients who shouldn't see each other's records, and when a change in one record should trigger an action somewhere else. These aren't advanced requirements. They're the baseline of any business app worth running.
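To make that baseline concrete, here is a minimal sketch of the rule "clients see only their own records, admins see everything, anyone else sees nothing." The `User` and `Record` shapes and the role names are assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class User:
    id: int
    role: str                  # assumed roles: "admin" or "client"
    client_id: Optional[int]   # None for users not tied to a client


@dataclass(frozen=True)
class Record:
    id: int
    client_id: int
    title: str


def visible_records(user: User, records: List[Record]) -> List[Record]:
    """Return only the records this user is allowed to see.

    Unknown roles get an empty list, so a new or misspelled role
    fails closed instead of exposing data.
    """
    if user.role == "admin":
        return list(records)
    if user.role == "client" and user.client_id is not None:
        return [record for record in records if record.client_id == user.client_id]
    return []
```

The rule itself is short. The difficulty is that it has to be applied on the server for every page, query, export, and automation that touches the data, and a generated codebase gives no guarantee that it is.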

At that point, you're either deep in a debugging cycle prompting your way toward something that might work, or you're discovering that what the AI built handles the demo scenario but not the actual workflow your team uses. Unlike a broken spreadsheet, a broken app with permissions, user accounts, and live data is harder to walk back from.

The answer here isn't to hire developers or give up on building. Instead, stop generating infrastructure from scratch and start using a platform where that infrastructure already exists and has been running reliably for years.

Build on proven foundations with Softr

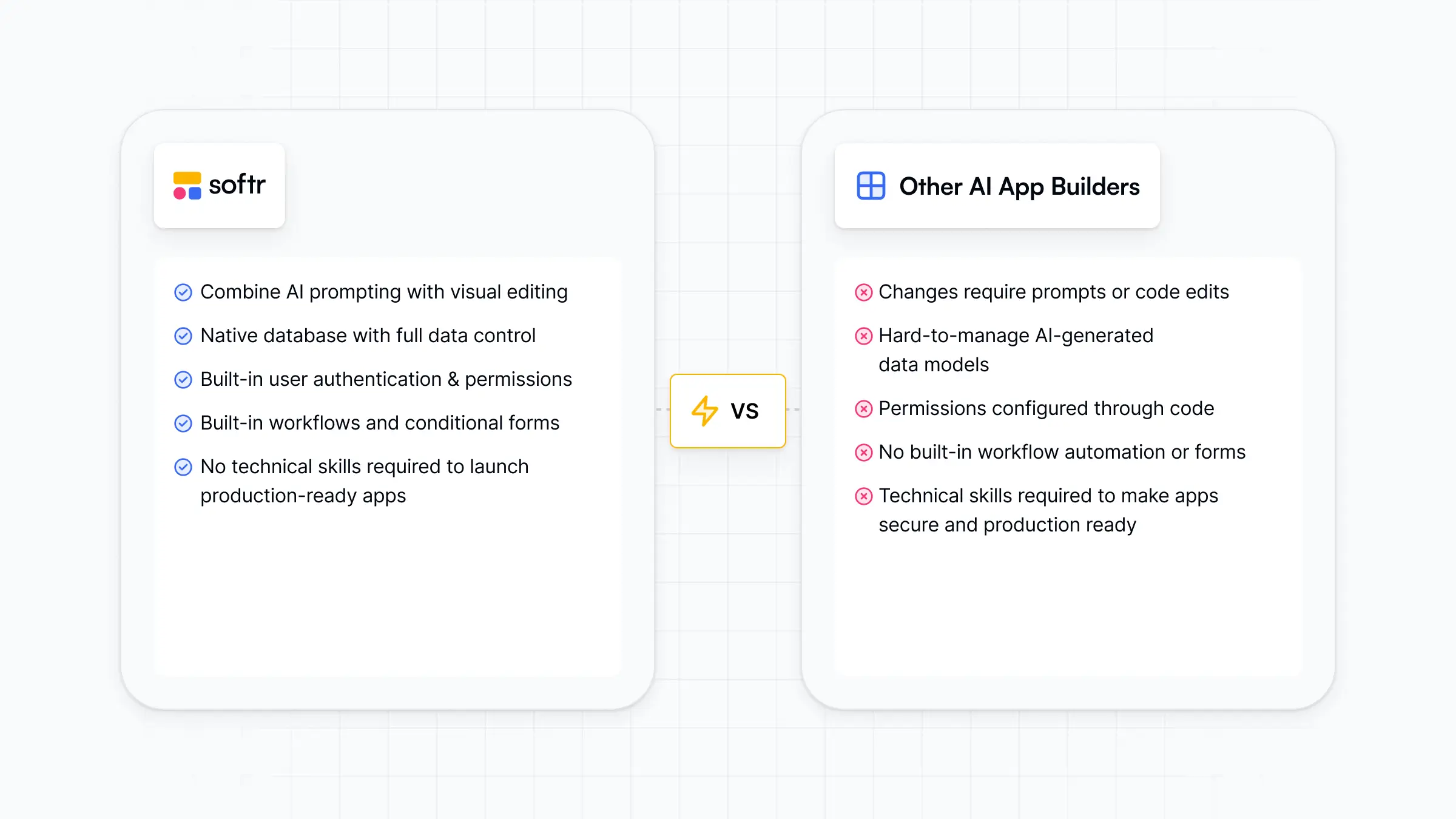

Authentication, user management, permission logic, database structure, hosting, backups, mobile responsiveness: none of these are what make your app valuable. They're the baseline. Every business app needs them, and the question is whether you generate them fresh from a prompt (and inherit all the risks above), or inherit them from a platform where they've already been built, tested, and maintained at scale.

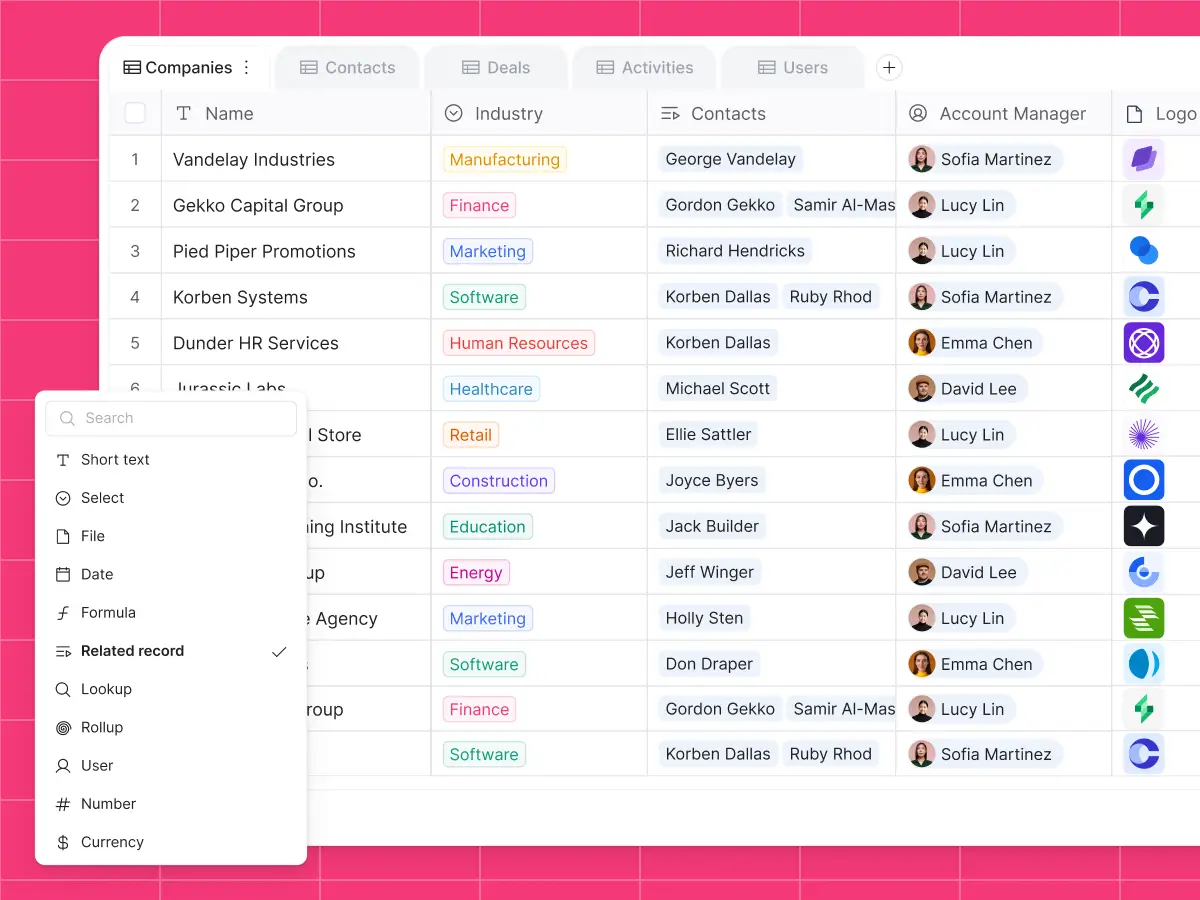

This is the premise behind Softr. When you describe your app to Softr's AI Co-Builder, you don't get generated code. You get a working application with a visual relational database, connected pages and blocks, user groups with appropriate permission logic, and navigation. It's production-ready on day one — because authentication, hosting, security, and permissions are part of the platform, not part of the prompt.

From there, everything the AI builds is visually editable. Add a field, adjust a permission, update a workflow without re-prompting (and without risking breaking something that currently works). And when you genuinely need something custom (like a specialized visualization, a drag-and-drop scheduler, or a CSV importer with specific column mapping), the Vibe Coding block generates it as a contained, scoped component.


The AI builds one block, not the entire application. It's a much more tractable problem for AI to get right, and it connects instantly to your existing data, theme, and permission system.

To reiterate: Use a solid, proven foundation for the parts that need to be solid. Use AI generation for the parts that need to be unique. The risks described in this article live mostly in the space between those two things, in the attempt to generate the foundation rather than inherit it.

👉 Try Softr free and pair proven no-code infrastructure with the speed and flexibility of vibe coding.

Frequently asked questions

- Does this mean vibe coding is a bad idea?

Not at all. For personal projects, prototypes, static pages, or tools used by a small trusted group, most of the risks here are minimal. Vibe coding is genuinely well-suited to those contexts. The concerns in this article apply specifically to apps with multiple users, business data, or ongoing maintenance requirements.

- What's the most common security issue in AI-generated apps?

Hardcoded API keys and overly permissive database configurations show up most often. A 2025 Veracode study found that 45% of LLM-generated code contains vulnerabilities from the OWASP Top 10. Running an explicit security audit prompt before going live, then cross-checking with a second model, catches a large proportion of them. It's a 20-minute step most people skip.

- Can I combine vibe coding with a platform like Softr?

Yes, and that's exactly the approach Softr is built for. The platform handles authentication, permissions, data, hosting, and standard UI blocks through proven infrastructure. For components that need to be genuinely custom, the Vibe Coding block generates a scoped React component that connects to your existing data and inherits your app's theme and permissions. You get the benefits of both without the risks of using either approach for the wrong job.

- When is vibe coding not enough for a business app?

When users have different roles and need to see different data. When the app handles information that belongs to clients or employees. When you need it to work correctly six months from now. When changes to one part of the app need to trigger actions elsewhere. These are the signals that you've moved from prototyping into territory where infrastructure matters.

- What is Softr?

Softr is an AI-native platform for building business apps without code. Teams use it to build client portals, internal tools, CRMs, company intranets, project trackers, and more. Over 1 million builders worldwide, including teams at Netflix, Google, Stripe, and UPS, run their operations on Softr.